I… am a weird guy (but then again, that isn’t news, hehe). And weird people sometimes have weird pet peeves. Melted cheese on Italian food and hamburgers? Great! I’ll take two. Melted cheese anywhere else? Blasphemy! That obviously extends to Statistics. And one of my weird pet peeves (strong enough, actually, that it prompted me to write a chapter of my dissertation and a subsequent published article about it) is when people conflate the Pearson and the Spearman correlation.

The theory behind what I am going to talk about is developed in said article, but while cleaning up more of my computer files I found an interesting example that didn’t make it in. Here’s the gist of it:

I really don’t get why people say that the Spearman correlation is the ‘robust’ version of, or alternative to, the Pearson correlation. Heck, if you simply google the words “Spearman correlation”, the second hit reads:

> Spearman’s rank-order correlation is the nonparametric version of the Pearson product-moment correlation

When I read that, my mathematical mind immediately goes to “if this is the non-parametric version of the Pearson correlation, then it must estimate the same population parameter”. And honest to G-d (don’t quote me on that one, though; I just *know* it’s true), I feel like the VAST MAJORITY of people think exactly that about the Spearman correlation. And I wouldn’t blame them, either… you can’t open an intro textbook for social scientists that doesn’t contain some dubious version of that statement. “Well,” the reader might think, “if that is not true, then why aren’t more people saying so?” The answer is that, for better or worse, this is one of those questions that is very simple to ask but whose answer is mathematically complicated. Still, here’s the gist of it (again, those who like theory as much as I do should read the article).

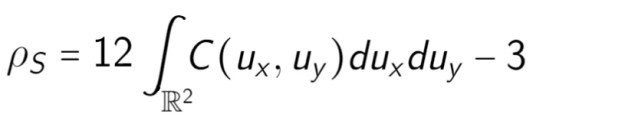

The Spearman rank correlation is defined, in the population, like this:

$$\rho_S = 12\int_0^1\!\!\int_0^1 C(u_1, u_2)\,du_1\,du_2 - 3,$$

where $u_1$ and $u_2$ are uniformly-distributed random variables and $C$ is the copula function that relates them (more on copulas here). By defining the Spearman rank correlation in terms of the lower-dimensional marginals it co-relates (i.e. the $u$’s) and the copula function, it becomes apparent that its overlap with the Pearson correlation depends entirely on what $C$ is. Actually, it is not hard to show that if $C$ is a Gaussian copula and the marginals are normal, then the following identity can be derived, where $\rho$ is the Pearson correlation:

$$\rho_S = \frac{6}{\pi}\arcsin\left(\frac{\rho}{2}\right)$$

That relates the Pearson correlation to the Spearman correlation. We’ve known this since Pearson’s own time, because he came up with it (albeit not explicitly) and called it the “grade correlation”. But the obvious corollary follows: an identity like this one need not exist in general. There could be copula functions for which the Spearman and Pearson correlations have a crazy, wacky relationship… and that is what I am going to show you today.
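If you want to see the copula definition and the Gaussian-copula identity $\rho_S = (6/\pi)\arcsin(\rho/2)$ at work, here is a small R sketch (my own illustration, not something from the article). It assumes a bivariate normal with Pearson correlation 0.6, plus an arbitrary seed and sample size, and it leans on the fact that the double integral of $C$ over the unit square equals $E[u_1 u_2]$, so that $\rho_S = 12E[u_1 u_2] - 3$.

```r
# Sketch (illustrative, not from the article): check the copula form of
# Spearman's rho and the Gaussian-copula identity by simulation, base R only.
set.seed(123)            # arbitrary seed, for reproducibility
n   <- 500000            # arbitrary sample size
rho <- 0.6               # assumed Pearson correlation of the two normals

# Bivariate standard normal pair with Pearson correlation rho
x <- rnorm(n)
y <- rho * x + sqrt(1 - rho^2) * rnorm(n)

# Probability integral transforms: u1 and u2 are Uniform(0, 1), and their
# joint distribution is the Gaussian copula with parameter rho
u1 <- pnorm(x)
u2 <- pnorm(y)

# The double integral of C over the unit square equals E[u1 * u2], so
# rho_S = 12 * E[u1 * u2] - 3 = 12 * E[(u1 - 1/2) * (u2 - 1/2)].
# The centered version is the same quantity with less Monte Carlo noise.
rho_s_copula <- 12 * mean((u1 - 0.5) * (u2 - 0.5))
rho_s_sample <- cor(x, y, method = "spearman")   # rank-based sample estimate
rho_s_theory <- (6 / pi) * asin(rho / 2)         # Gaussian-copula identity

round(c(pearson = cor(x, y), copula = rho_s_copula,
        spearman = rho_s_sample, theory = rho_s_theory), 3)
```

The three Spearman numbers should land near $(6/\pi)\arcsin(0.3) \approx 0.582$, slightly below the Pearson value of about 0.6: under the Gaussian copula the two correlations are close, but they are still not the same parameter.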

I don’t remember why I didn’t include this example in the article, because it is a very efficient one. It shows a case where the Spearman correlation is essentially 1 while the Pearson correlation is essentially 0 (in a simulation, up to sampling error and floating-point precision).

Define $Z \sim N(0, 0.1^2)$, $X = Z^{201}$ and $Y = e^{Z}$. If you use R to simulate a very large sample (say, 1 million draws), you get the following Pearson and Spearman correlations:

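```r
N <- 1000000

# Z ~ Normal(0, sd = 0.1); X and Y are both strictly increasing functions of Z
Z <- rnorm(N, mean = 0, sd = 0.1)
X <- Z^201
Y <- exp(Z)

cor(X, Y, method = "pearson")
#> [1] 0.004009381

cor(X, Y, method = "spearman")
#> [1] 0.9963492
```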

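The trick for this example is to notice that $X = Z^{201}$ is microscopic and hugs 0 for virtually every draw: only the handful of most extreme values of $Z$ produce anything non-negligible, and those few points end up dominating the Pearson correlation. Meanwhile, $Y = e^{Z}$ changes only a little and hovers around 1. Because both are strictly increasing functions of $Z$, their ranks line up perfectly in exact arithmetic, and ranks are all the Spearman correlation looks at.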
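One detail worth flagging: since $X$ and $Y$ are both strictly increasing in $Z$, the sample Spearman correlation should be exactly 1, so the 0.9963492 above is not sampling error. A plausible culprit is floating-point underflow: in double precision, $Z^{201}$ rounds to 0 whenever $|Z|$ is below roughly 0.025, so about a fifth of the $X$ values become tied at zero. The R sketch below checks this. The seed is arbitrary (not the one behind the numbers above), and the $\sqrt{1 - f^3}$ formula is an approximation I am assuming here: replacing a middle block of ranks, a fraction $f$ of the sample, by their average shrinks a perfect rank correlation to about $\sqrt{1 - f^3}$.

```r
# Sketch: check whether floating-point underflow explains why the sample
# Spearman correlation of X = Z^201 and Y = exp(Z) is 0.996 instead of 1.
set.seed(1)                         # arbitrary seed, not the original one
N <- 1000000
Z <- rnorm(N, mean = 0, sd = 0.1)
X <- Z^201
Y <- exp(Z)

# Fraction of draws where Z^201 underflowed to (signed) zero; these all tie
f <- mean(X == 0)
f

# Z and exp(Z) should have no such ties, so their ranks agree exactly
cor(Z, Y, method = "spearman")

# If the tied zeros occupy a block of middle ranks covering a fraction f,
# the Spearman correlation is approximately sqrt(1 - f^3)
c(observed = cor(X, Y, method = "spearman"), approx = sqrt(1 - f^3))
```

If the diagnosis is right, `f` comes out near 0.19 and the two numbers on the last line agree to about three decimal places, which also lines up with the 0.996 in the original run.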

In any case, the point is that, even without getting too formal, it’s not overly difficult to see that the Spearman correlation is its own statistic, estimating its own population parameter, which may or may not have anything to do with the Pearson correlation depending on the copula function that describes the bivariate distribution.
