::UPDATE::

A published article introducing this app is now online in BMC-Medical Research Methodology. If you plan on using this app, it would be a good idea to cite it 😉

The relationship between statistical power and predictor distribution in multilevel logistic regression: a simulation-based approach

WARNING (1): This app can take a little while to run. Do not close your web browser unless it gives you an error. If it appears ‘stuck’ but you haven’t got an error it means the simulation is still running on the background.

WARNING (2): If you keep getting a ‘disconnected from server’ error, close down your browser and open a new window. If the problem still persists that means too many people have tried to access it during the day and the server has shut down. This app is hosted on a free server and it can only accommodate a certain number of people every day.

This app will perform computer simulations to estimate power for multilevel logistic regression models allowing for continuous or categorical covariates/predictors and their interaction. The continuous predictors come in two types: normally distributed or skewed (i.e. χ2 with 1 degree of freedom). It currently only supports binary categorical covariates/predictors (i.e. Bernoulli-distributed) but with the option to manipulate the probability parameter p to simulate imbalance of the groups.

The app will give you the power for each individual covariate/predictor AND the variance component for the intercept (if you choose to fit a random-intercept model) or the slope (if you choose to fit a model with both a random intercept and a random slope). It uses the Wald test statistic for the fixed effect predictors and a 1-degree-of-freedom likelihood-ratio test for the random effects (← yes, I know this is conservative but it’s the fastest one to implement).

When you open the app, here’s how it looks:

What **you**, as the user, need to provide is the following:

The Level 1 and Level 2 sample sizes. If I were to use the ubiquitous example of “children in schools” the Level 1 sample would be the children (individuals within a cluster) and the Level 2 sample would be the schools (number of clusters). For demonstration purposes here I’m asking for groups of 50 ‘children’ in 10 ‘schools’ for a total sample size of 50×10 = 500 children.

The variance for the random effects. You can either choose to fit an intercept-only model (so no variance of the slope) or a random intercept AND random slope model. You cannot fit a random-slope only model here and you cannot set the variances at 0 to fit a single-level logistic regression (there’s other software to do power analysis for single-level logistic regression). At least the variance of the intercept needs to be specified. Notice that the app defaults to an intercept-only model and under ‘Select Covariate’ it will say ‘None’. That changes when you click on the drop-down menu where it gives you the option of which random slope do you want. Notice that you can only choose one predictor to have a random slope. Will work on the general case in the future.

The number of covariates (or predictors) which I believe is pretty self-explanatory. Just notice that the more covariates you add, the longer it will take for the simulation to run. The default in the app is 2 covariates.

This would be the core of the simulation engine because the user needs to specify:

- Regression coefficients (‘Beta’). This space lets the user specify the effect size for the regression coefficients under investigation. The default is 0.5 but that can be changed to any number. In the absence of any outside guidance, Cohen’s small-medium-large effect sizes are recommended. Remember that the regression coefficient for binary predictors is conceptualized as a standardized mean difference so it should be in Cohen’s d metric.

- Level of the predictor (‘Level’). It only supports 2-level models so the options are ‘1’ or ‘2’. This section indicates whether a predictor belongs to the Level 1 sample (e.g. the ‘children’) or the Level 2 sample (e.g. the ‘school). Notice that whichever predictor gets assigned a random slope MUST also be selected as Level 1. Otherwise the power analysis results will not make sense. It currently only supports one predictor at the Level 1 with a random slope. Other predictors can be included at Level 1 but they won’t have the option for a random slope component.

- Distribution of the covariates (‘Distribution’). Offers 3 options: normally-distributed, skewed (i.e. χ2 with 1 degree of freedom or a skew of about √8) and binary/Bernoulli-distributed. For the binary predictor the user can change the population parameter p and create imbalance between the groups. So, for instance, if p=0.3 then 30% of the sample would belong to the group labelled as ‘1’ and 70% to the group labelled as ‘0’. The default for this option is 0.5 to create an even 50/50 split.

- Intercept (‘Intercept Beta’). Lets the user define the intercept for the regression model. The default is 0 and I wouldn’t recommend changing it unless you’re making inferences about the intercept of the regression model.

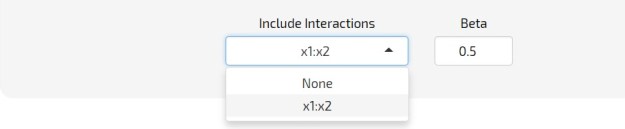

Once the number of covariates has been selected, the app will offer the user all possible 2-way interaction effects irrespective of the level of the predictor and distribution characteristics. The user can select whichever 2-way interaction is of interest and assign an effect size/regression coefficient (i.e. ‘Beta’). The app will use this effect size to calculate power. Notice that the distribution of the interaction is fully defined by the distribution of its constituting main effects.

The number of datasets generated using the population parameters previously defined by the researcher. The default is 10 but I would personally recommend a minimum of 100. The larger the number of replications the more accurate the results will be but also the longer the simulation will take.

The simulated power is calculated as the proportion of statistically significant results out of the number of simulated datasets and will be printed here. Notice the time progress bar indicating that the simulation is still running. For a 2-covariate model with both a random effect for the intercept and the slope the simulation took almost 3 min to run. Expect longer waiting times if the model has lots of covariates.

This is what a sample of a full power analysis looks like. The estimated power can be found under the column ‘Power’. The column labelled ‘NA’ shows the proportion of models that did not converge. In this case, all models converged (there are 0s all throughout the NA column) but the power of the fixed and random effects is relatively low with the exception of the power for the variance of the random intercept. In this example one would need to either increase the effect size from 0.5 to something larger or increase the Level 1 and Level 2 sample sizes in order to obtain acceptable power levels of 80%. You can either download your power analysis results as a .csv file or copy-paste them by clicking on the appropriate button.

Finally, here is the link for the shiny web app:

YOU CAN CLICK HERE TO ACCESS THE APP